In today’s healthcare marketing landscape, data is everywhere, but insight is elusive. From campaign performance and broker interactions to claims and clinical records, the sheer volume of data should be a competitive advantage. Instead, fragmented systems, siloed reports, and disconnected teams often result in more confusion than clarity.

Whether you’re focused on B2B or B2C, this challenge is widely understood. Most marketers don’t lack awareness; they’re already making moves to fix it. Unfortunately, that’s where many are encountering their next problem.

In an effort to unify customer data and power smarter campaigns, many teams invested in Customer Data Platforms (CDPs), viewing them as a silver bullet. But the promise hasn’t matched the reality.

CDPs were built to activate, not to analyze. Most struggle to provide true cross-channel visibility, AI-ready insights, or the depth of performance tracking required by modern go-to-market teams and C-suite stakeholders. We’ll explore these needs in detail in just a moment, but first, it’s worth understanding why the CDP model is breaking down.

Traditional CDPs promised a single source of truth, but delivered it in a rigid, vendor-controlled box. They’re often expensive, difficult to adapt, and optimized for short-term execution rather than long-term agility. As marketing stacks evolve, these monolithic platforms become bottlenecks, limiting integration, innovation, and insight.

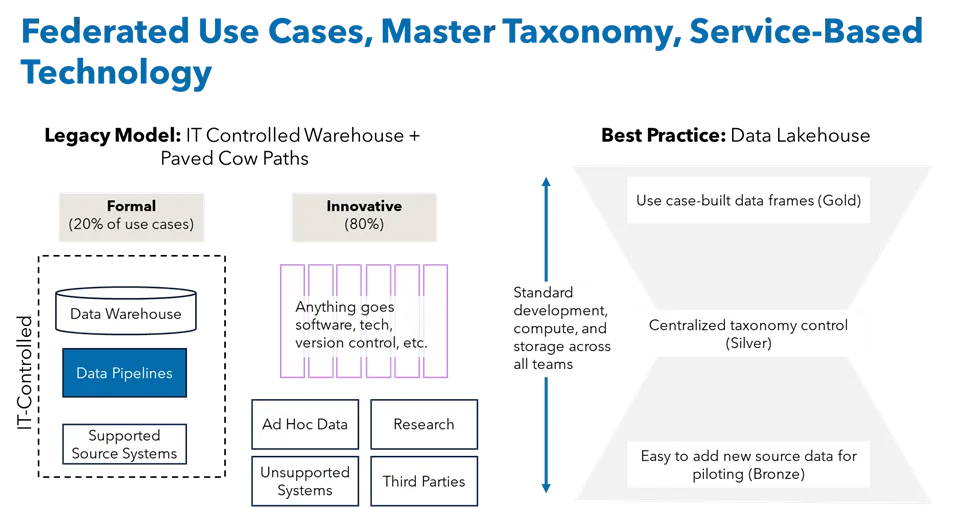

Introducing Composability: A Smarter, More Strategic Path Forward

Composability offers a fundamentally different approach. Instead of relying on one vendor or platform to do it all, composable data architecture lets marketers build and evolve their own stack—piece by piece—based on changing needs.

Think of it like LEGO bricks. Marketers can connect best-in-class tools for campaigns, analytics, and CX while maintaining a centralized, AI-ready data layer. Composability empowers marketers to innovate without ripping and replacing systems, while still ensuring their data is unified, structured, and usable across teams.

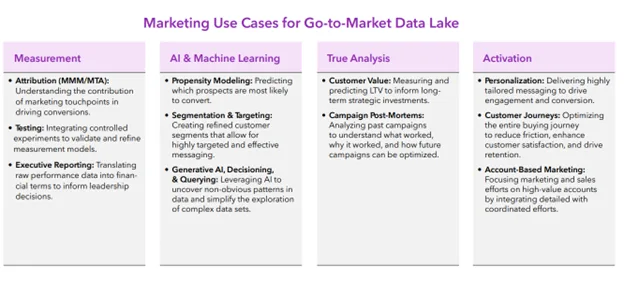

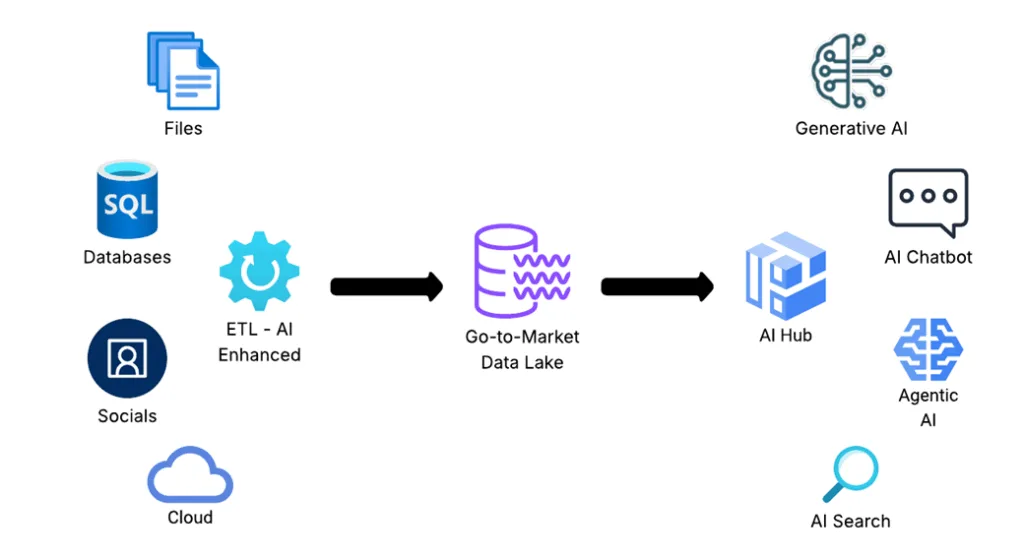

At Marketbridge, we call this centralized foundation the Go-to-Market Data Lake (GTMDL): a composable environment that integrates marketing, sales, CX, operations, and even clinical data to fuel attribution, personalization, and growth.

And while the architecture matters, strategy matters more. That’s why we help healthcare marketers not only build GTMDLs but also design the right data strategy to make them valuable.

Three Essential Components of a Modern Go-to-Market Data Strategy

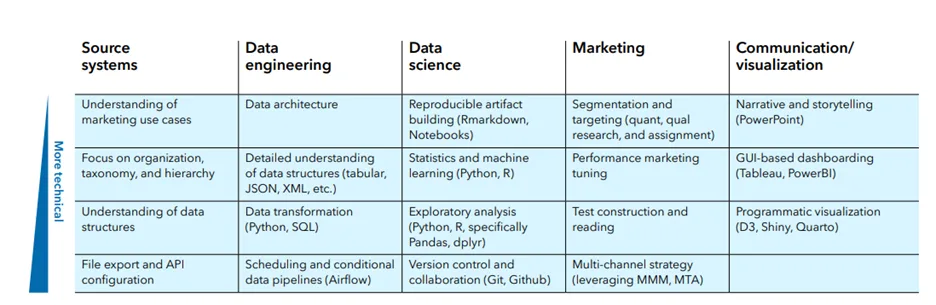

To unlock the full potential of a composable architecture, you need more than the right tools; you need a strong strategic foundation. These three elements are the building blocks of a future-proof data strategy for healthcare marketers:

1. Marketing Attribution Models

Attribution remains one of the most challenging aspects of healthcare marketing. At its core, it’s about understanding what’s working, what’s not, and why. In today’s complex, multi-touch, multi-channel customer journeys, that clarity is hard to come by.

Every interaction matters. From paid search to sales outreach to out-of-home campaigns, each touchpoint influences the decision-making process. Yet most marketers still can’t answer critical questions like:

- Which campaigns are actually driving conversions?

- Are we over-investing in one channel and ignoring others?

- What is the true ROI of our brand or media spend?

- Which messages resonate with specific audiences?

The absence of visibility leads to misallocated budgets, inconsistent performance, and decisions based on intuition rather than data. Although improved dashboards can assist, they are insufficient on their own.

This issue becomes even more critical when marketing leaders must demonstrate their impact to the C-suite. Executives primarily focus on business outcomes rather than specific channel metrics.

Without attribution models that connect marketing investments to clinical engagement, member retention, or revenue growth, marketing efforts are often viewed as a cost center rather than a driver of growth. The pressure to prove ROI in measurable, financial terms has never been higher.

Solving attribution requires a unified data foundation and models that reflect real-world behaviors, not just last-touch conversions. Tools alone cannot connect every part of the customer journey or account for external variables like competition, compliance, or seasonality. That is why advanced models, such as Marketing Mix Modeling (MMM) and Multi-Touch Attribution (MTA), are essential to a modern marketing strategy. They move attribution from reactive reporting to proactive planning and provide the kind of defensible, business-aligned insights that resonate with senior leadership.
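
To make the contrast with last-touch reporting concrete, here is a minimal sketch that compares last-touch credit with a simple linear (equal-credit) multi-touch rule. The journeys, channel names, dollar values, and function names are all hypothetical and chosen for illustration. Production MTA and MMM models are statistical and far richer than this equal-split rule.

```python
from collections import defaultdict


def last_touch(journeys):
    """Give 100% of each conversion's value to the final touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        if not touchpoints:
            continue  # nothing to credit
        credit[touchpoints[-1]] += value
    return dict(credit)


def linear_multi_touch(journeys):
    """Split each conversion's value equally across every touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        if not touchpoints:
            continue
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical converted journeys: (ordered touchpoints, conversion value).
journeys = [
    (["paid_search", "direct_mail", "sales_outreach"], 900.0),
    (["out_of_home", "paid_search"], 600.0),
]

last_touch_credit = last_touch(journeys)
linear_credit = linear_multi_touch(journeys)

print("Last-touch:", last_touch_credit)
print("Linear multi-touch:", linear_credit)
```

In this toy data, last-touch gives out-of-home and direct mail no credit at all, while the linear rule shows both contributed to conversions. That gap is the kind of blind spot that leads to misallocated budgets.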

2. Taxonomy

Taxonomy may not be flashy, but it’s the quiet engine behind every successful data strategy.

Simply put, taxonomy is the consistent naming, tagging, and classification of data across systems. Without it, even the most sophisticated models and tools fail. In healthcare, where data flows from CRMs, marketing automation platforms, claims databases, and EMRs, inconsistency is the enemy. The same channel might be labeled “DM,” “Mailer,” or “Offline Touch,” making measurement, automation, and analytics nearly impossible.
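
As a rough illustration of what a governed taxonomy does in practice, the sketch below maps those three raw labels to one canonical channel name. The mapping table, the canonical value `direct_mail`, and the `unmapped` fallback are assumptions made for this example, not a prescribed standard.

```python
# Hypothetical governed taxonomy: raw label (lowercased) -> canonical channel.
CHANNEL_TAXONOMY = {
    "dm": "direct_mail",
    "mailer": "direct_mail",
    "offline touch": "direct_mail",
}


def normalize_channel(raw_label):
    """Return the canonical channel for a raw label, or 'unmapped' if unknown."""
    key = raw_label.strip().lower()
    return CHANNEL_TAXONOMY.get(key, "unmapped")


# Labels as they might arrive from different source systems.
raw_labels = ["DM", "Mailer", " Offline Touch ", "Podcast"]
normalized = [normalize_channel(label) for label in raw_labels]

print(normalized)
```

Flagging unknown labels as `unmapped` rather than guessing keeps gaps visible, so someone who owns the taxonomy can decide how a new label should be classified.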

A clean, governed taxonomy enables:

- More Accurate Attribution: Consistent tags let you connect touchpoints to outcomes with confidence.

- Stronger AI Models: Clean, labeled data is essential for machine learning and predictive analytics.

- Better Collaboration: When Sales, Marketing, CX, and IT speak the same data language, you eliminate misalignment and confusion.

Ultimately, taxonomy turns raw data into structured, queryable insights. Without it, your composable stack cannot function effectively, no matter how advanced the architecture.

3. Data Integration and Accessibility

Even with strong models and a clean taxonomy, your strategy stalls without seamless integration and access to data.

In healthcare, valuable insights are often buried in disconnected systems. A claims system captures utilization, a marketing platform tracks member engagement, a CRM tracks sales outreach, and none of these systems talk to one another. The result? Manual workarounds, data gaps, and missed opportunities.

Composable architecture solves this by connecting systems through APIs and microservices. It allows you to:

- Centralize and Normalize Data in Real Time: Build a unified view without replacing every tool in your stack.

- Act on Data Immediately: Marketers can analyze and optimize campaigns without waiting weeks for reports.

- Personalize at Scale: Trigger outreach based on real-time behavior, clinical activity, or enrollment milestones.

- Minimize IT Bottlenecks: Provide governed access to marketers and analysts while maintaining compliance and security.

Integration is the connective tissue of a data strategy. Without it, even the best models and insights stay locked inside silos.
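
To show what a unified view can look like at its simplest, here is a sketch that combines records from the three systems described above into one profile per member. It assumes every source already shares a clean `member_id` key, which is rarely true without identity resolution. The field names and sample records are hypothetical.

```python
def build_unified_view(claims, engagement, crm):
    """Group records from each source system under a shared member_id."""
    unified = {}
    sources = (("claims", claims), ("engagement", engagement), ("crm", crm))
    for source_name, records in sources:
        for record in records:
            member_id = record["member_id"]
            profile = unified.setdefault(member_id, {"member_id": member_id})
            # Keep each source's fields in its own namespace.
            # A later record from the same source overwrites an earlier one.
            profile[source_name] = {
                field: value
                for field, value in record.items()
                if field != "member_id"
            }
    return unified


# Hypothetical records from three disconnected systems.
claims = [{"member_id": "M001", "visits_last_90d": 2}]
engagement = [
    {"member_id": "M001", "email_opens": 5},
    {"member_id": "M002", "email_opens": 1},
]
crm = [{"member_id": "M002", "last_outreach": "2024-05-01"}]

unified = build_unified_view(claims, engagement, crm)

for profile in unified.values():
    print(profile)
```

Keeping each source in its own namespace preserves where every field came from, which helps when access has to be governed differently for clinical and marketing data.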

The Next Era of Healthcare Marketing Starts with Data Strategy

Healthcare marketers are navigating one of the most complex data environments in any industry. The stakes are high, and so is the pressure to deliver measurable outcomes. But as tempting as it is to chase the next big platform, lasting success comes from something deeper: a modern data strategy that aligns teams, tools, and tactics around shared goals.

A composable approach gives you the flexibility to evolve as your business grows, the clarity to connect action to outcome, and the control to move fast without breaking compliance or collaboration. But the real power lies in how you use it. In healthcare, the ROI of better data isn’t just improved marketing and sales performance; it’s healthier members, reduced churn, and stronger care engagement. A strategic approach to data is what turns fragmented insights into meaningful action that drives both clinical and business impact.

At Marketbridge, we help healthcare organizations move beyond disconnected tools to build integrated, insight-driven systems that support real business outcomes. From attribution models and clean taxonomy to full data integration, we bring strategy, structure, and execution together—so marketers can finally do what they’ve always wanted: make smarter decisions with confidence.

Because in the end, it’s not about collecting more data. It’s about putting it to work.

Download the whitepaper, “Building a composable go-to-market data stack”

Rethink your data foundation and lead the next era of AI-ready, insight-driven marketing.